For years, the rule was simple: if you ranked on Google, you existed. That rule still holds, but it is no longer the whole story. ChatGPT, Claude, Perplexity, and Gemini now read websites on their own, summarize the content, and deliver answers directly to users. No click. No visit. No notification.

The question is no longer just: does your website rank on Google? It is also: does an AI agent understand what your site is about when it visits? Cloudflare recently introduced a term for exactly this, Agent Readiness, and released a free tool to measure it. We tested the tool on one of our own projects and learned a few things along the way.

What is Agent Readiness?

Agent Readiness describes how well a website is prepared for visits from AI agents. In other words, it is no longer just about human visitors and traditional search crawlers, but also about autonomous systems that read, understand, and reuse your content.

The analogy is straightforward: twenty years ago, you had to optimize your site for search engines, and we called that SEO. Today, AI agents are part of the picture, and that means AEO: Answer Engine Optimization. The principles are related, but the requirements are new. An agent does not care about your beautiful images or elaborate animations. It wants structured, machine-readable information it can extract quickly and clearly.

Ignore this and you risk exactly what poor SEO used to mean: being invisible. The difference is that the user is not searching on Google anymore. They are asking ChatGPT or Claude.

The Cloudflare tool: isitagentready.com

Cloudflare released isitagentready.com, a free tool that measures exactly that. Enter your URL, and within seconds you get a score between 0 and 100 plus a rating across five levels, from “Not ready” to “Agent-Native.” You also get a clear breakdown of what is missing and what to do next.

The tool checks three dimensions that make up the overall score. None of them are especially complex to implement, but very few websites have them in place. That is exactly where the opportunity lies: act now, and you get a clear head start.

The three dimensions of Agent Readiness

1. Discoverability – can your site be found?

Before an AI agent can understand your content, it has to find it first. The tool checks three classic signals: a correct robots.txt, a valid sitemap.xml, and a Link header in your HTTP responses. None of this is new, but many websites still have gaps or outdated entries that slow agents down.

Discoverability is the foundation. Without it, even the best content will not help. Many of these points overlap with classic technical SEO. If you have already done that work, you are ahead of the game.
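
To make the discoverability signals concrete, here is a minimal sketch of how a build script might produce a robots.txt with a sitemap entry and a Link header value in the RFC 8288 format. The domain example.com, the function names, and the link relations (describedby, alternate) are assumptions for illustration. Which relations the Cloudflare tool actually rewards is not something we verified here.

```python
from __future__ import annotations


def build_robots_txt(sitemap_url: str) -> str:
    """Render a permissive robots.txt that advertises the sitemap location."""
    lines = [
        "User-agent: *",
        "Allow: /",
        "",
        f"Sitemap: {sitemap_url}",
    ]
    return "\n".join(lines) + "\n"


def build_link_header(targets: list[tuple[str, str, str | None]]) -> str:
    """Format (url, rel, media_type) tuples as a single Link header value."""
    parts = []
    for url, rel, media_type in targets:
        entry = f'<{url}>; rel="{rel}"'
        if media_type:
            entry += f'; type="{media_type}"'
        parts.append(entry)
    return ", ".join(parts)


if __name__ == "__main__":
    site = "https://example.com"  # hypothetical domain
    print(build_robots_txt(f"{site}/sitemap.xml"))
    print(
        "Link:",
        build_link_header(
            [
                # Relation choices below are illustrative assumptions.
                (f"{site}/llms.txt", "describedby", "text/plain"),
                (f"{site}/index.md", "alternate", "text/markdown"),
            ]
        ),
    )
```

The resulting string is what you would set as the value of the Link response header in your server or CDN configuration.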

2. Content – can the agent read your content efficiently?

The content check is the most interesting part. It focuses on two things: llms.txt and Markdown content negotiation.

llms.txt is a new convention, similar to robots.txt but designed for AI systems. In a single file, you provide a structured overview of your most important content, giving agents a shortcut so they do not have to crawl the entire site first. It saves resources on both sides and increases the chances that your content will be interpreted correctly.
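
As a rough illustration, the following sketch generates an llms.txt during a build. It assumes the layout from the public llms.txt proposal: a title line, a short blockquote summary, then sections containing Markdown link lists. The Page class, the function name, and the example content are hypothetical.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class Page:
    title: str
    url: str
    summary: str


def build_llms_txt(site_name: str, description: str,
                   sections: dict[str, list[Page]]) -> str:
    """Render an llms.txt document: H1 title, blockquote summary, H2 link lists."""
    lines = [f"# {site_name}", "", f"> {description}", ""]
    for heading, pages in sections.items():
        lines.append(f"## {heading}")
        lines.append("")
        for page in pages:
            lines.append(f"- [{page.title}]({page.url}): {page.summary}")
        lines.append("")
    # Collapse trailing blank lines into a single final newline.
    return "\n".join(lines).rstrip() + "\n"


if __name__ == "__main__":
    # Hypothetical site data; in practice this would come from your CMS or content files.
    text = build_llms_txt(
        "Example Site",
        "Short summary of what the site offers.",
        {"Pages": [Page("Home", "https://example.com/", "Start page")]},
    )
    print(text)
```

Writing the returned string to /llms.txt at the root of the published site on every build keeps the overview in sync with your content.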

Markdown content negotiation means that when an agent asks for Markdown in the Accept request header (for example, Accept: text/markdown), your server returns the page as Markdown, one of the easiest formats for AI models to process. No HTML overhead. No JavaScript. No navigation. Just the raw content.
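
The decision logic behind this can be sketched in a few lines. This is a simplified illustration, not a full implementation of HTTP content negotiation: it only compares the q-values of text/markdown and text/html, ignores wildcards on purpose so that ordinary browsers keep getting HTML, and uses hypothetical function names.

```python
from __future__ import annotations


def parse_accept(header: str) -> dict[str, float]:
    """Map each media type in an Accept header to its q-value (default 1.0)."""
    weights: dict[str, float] = {}
    for item in header.split(","):
        if not item.strip():
            continue
        media_type, *params = [part.strip() for part in item.split(";")]
        q = 1.0
        for param in params:
            name, _, value = param.partition("=")
            if name.strip().lower() == "q":
                try:
                    q = float(value)
                except ValueError:
                    q = 0.0  # treat a malformed weight as "not acceptable"
        weights[media_type.lower()] = q
    return weights


def wants_markdown(header: str) -> bool:
    """True only if text/markdown is explicitly listed and not ranked below HTML."""
    weights = parse_accept(header)
    markdown_q = weights.get("text/markdown", 0.0)
    html_q = weights.get("text/html", 0.0)
    return markdown_q > 0 and markdown_q >= html_q


def response_headers(accept_header: str) -> dict[str, str]:
    """Pick the Content-Type and tell caches that the response varies by Accept."""
    if wants_markdown(accept_header):
        content_type = "text/markdown; charset=utf-8"
    else:
        content_type = "text/html; charset=utf-8"
    return {"Content-Type": content_type, "Vary": "Accept"}
```

Sending Vary: Accept matters here: without it, a cache could hand the Markdown version to a browser or the HTML version to an agent.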

3. Bot access control – who gets to do what?

The third check focuses on Content Signals in your robots.txt. Here you define which bots may use your content and for what purpose. Do you want to allow AI model training? Do you only want to appear in search answers? Or both? Content Signals let you control that precisely, and a year ago this was not even available in this form.
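
A small sketch of what such a rule can look like when generated from a script. It assumes the Content-Signal line syntax from Cloudflare's Content Signals Policy, with the three signals search, ai-input, and ai-train set to yes or no. Check the current policy text before relying on it. The function name and the chosen values are illustrative.

```python
def build_content_signal_rules(search: bool, ai_input: bool, ai_train: bool,
                               user_agent: str = "*") -> str:
    """Render a robots.txt group that declares how content may be used."""

    def yes_no(flag: bool) -> str:
        return "yes" if flag else "no"

    signal = (
        f"search={yes_no(search)}, "
        f"ai-input={yes_no(ai_input)}, "
        f"ai-train={yes_no(ai_train)}"
    )
    lines = [
        f"User-agent: {user_agent}",
        f"Content-Signal: {signal}",  # assumed syntax, see lead-in
        "Allow: /",
    ]
    return "\n".join(lines) + "\n"


if __name__ == "__main__":
    # Example policy: appear in search and AI answers, but opt out of model training.
    print(build_content_signal_rules(search=True, ai_input=True, ai_train=False))
```

Keep in mind that these signals express a preference. Like the rest of robots.txt, they rely on the bot choosing to respect them.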

Our real-world test: baubetreuer.berlin from 0 to 100

Theory is useful, but real-world results matter more. We ran one of our own projects through the tool: baubetreuer.berlin, a website for a Berlin-based construction manager. The first result was poor. The site was solidly built, mobile-optimized, and fast, but it was practically invisible to AI agents. No llms.txt. No Link header. No Content Signals.

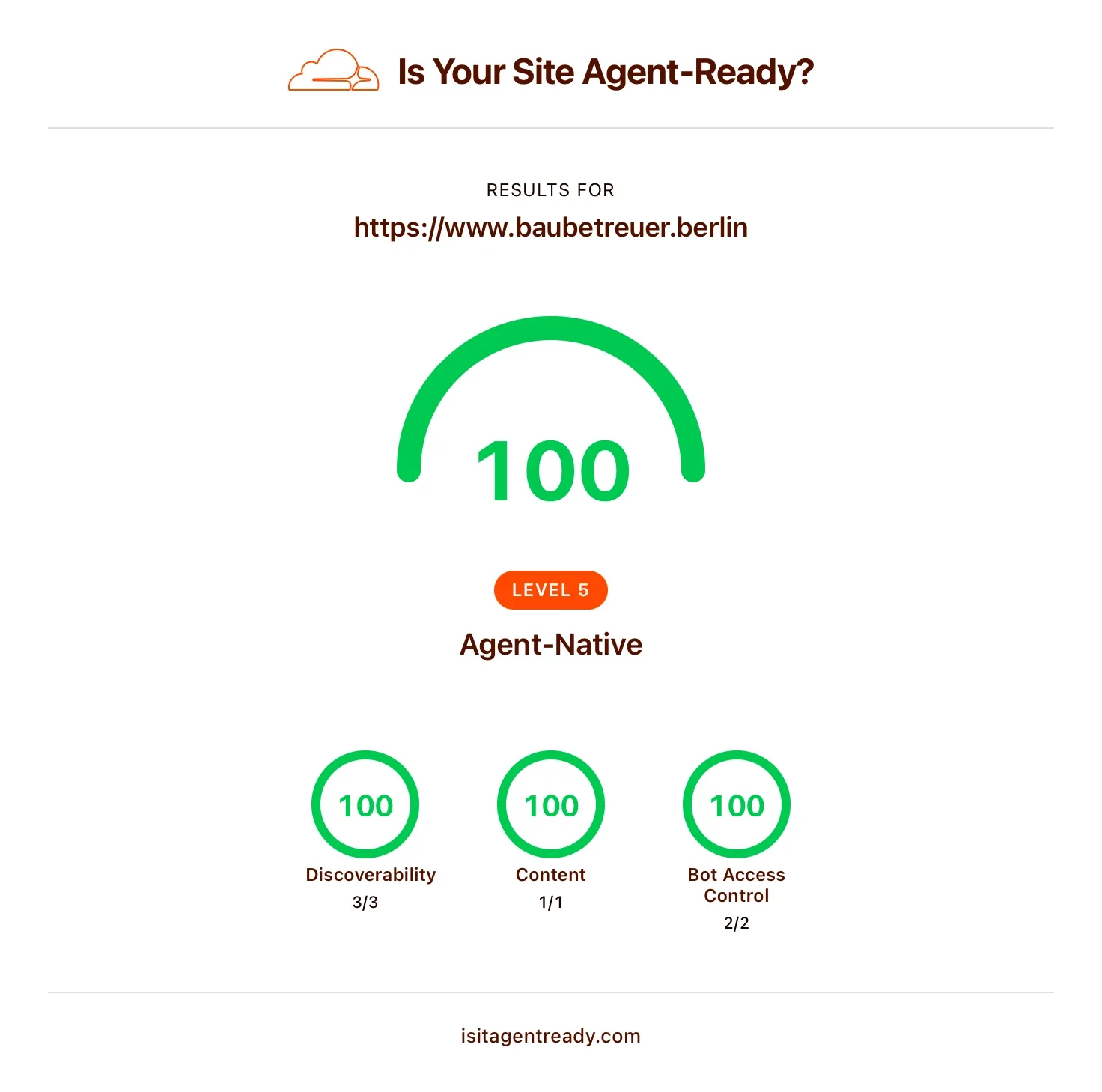

We worked through the three dimensions systematically: updated robots.txt, optimized sitemap.xml, added a Link header, generated llms.txt, set up Markdown content negotiation, and configured Content Signals. The result after a few hours of work: 100/100 points, Level 5 “Agent-Native.” Discoverability 3/3, Content 1/1, Bot Access Control 2/2.

The effort? Manageable. The impact? The website is now fully prepared for a world where AI agents are often the first stop for information. Projects like this show how practical the topic already is and how early you can build a meaningful advantage with relatively little work.

What you can do now – especially with Astro

If your website runs on Astro, you are in a great position. Astro already serves static HTML that agents can read cleanly. llms.txt and Markdown versions of your pages can be generated automatically during the build. Set it up once, and every deployment updates it for you.

Practical steps, whichever system you use:

- Test your site at isitagentready.com – free and takes less than a minute

- Check robots.txt and sitemap.xml for accuracy and completeness

- Create an llms.txt with a clean overview of your most important content

- Set up Markdown content negotiation if your hosting supports it

- Configure Content Signals to define how your content may be used

If you are already working with Astro, you can integrate all of this into an existing setup without rearchitecting anything. Other systems require a bit more work, but none of it is especially difficult.

Agent Readiness is the new SEO

When SEO first became a serious topic twenty years ago, the agencies that won were the ones that understood early how search engines actually worked. Today, we are at a similar inflection point. Over the next few years, AI agents may account for far more visits than traditional search does. Prepare your website now, and you will be visible when that shift happens.

Agent Readiness does not replace SEO. It complements it. Both channels will exist side by side, and both serve the same goal: being found and understood. The difference is that AEO is still in its early stages. Conventions like llms.txt are still new and not yet formally standardized. That is exactly what makes this the right moment to get ahead.

Conclusion

The way people find information is changing fundamentally right now. Ignore it, and you risk what poorly optimized websites experienced fifteen years ago: losing relevance even though the quality of the content stays the same. The good news is that Agent Readiness is achievable without an unreasonable amount of work, and the Cloudflare tool gives you a clear roadmap.

If you want to know where your website stands, or if you need support optimizing it, get in touch. We have already implemented this successfully for our own projects and for clients, and we will give you honest advice about what actually makes sense for your site. Just drop us a line at kontakt@creatives-berlin.de.